In a widely read Opinion Editorial in Time magazine on March 29, 2023,1 the artificial intelligence (AI) researcher and pioneer in the search for artificial general intelligence (AGI) Eliezer Yudkowsky, responding to the media hype around the release of ChatGPT, cautioned:

Many researchers steeped in these issues, including myself, expect that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die. Not as in “maybe possibly some remote chance,” but as in “that is the obvious thing that would happen.”

How obvious is our coming collapse? Yudkowsky punctuates the point:

If somebody builds a too-powerful AI, under present conditions, I expect that every single member of the human species and all biological life on Earth dies shortly thereafter.

Surely the scientists and researchers working at these companies have thought through the potential problems and developed workarounds and checks on AI going too far, no? No, Yudkowsky insists:

We are not prepared. We are not on course to be prepared in any reasonable time window. There is no plan. Progress in AI capabilities is running vastly, vastly ahead of progress in AI alignment or even progress in understanding what the hell is going on inside those systems. If we actually do this, we are all going to die.

AI Dystopia

Yudkowsky has been an AI Dystopian since at least 2008 when he asked: “How likely is it that Artificial Intelligence will cross all the vast gap from amoeba to village idiot, and then stop at the level of human genius?” He answers his rhetorical question thusly: “It would be physically possible to build a brain that computed a million times as fast as a human brain, without shrinking the size, or running at lower temperatures, or invoking reversible computing or quantum computing. If a human mind were thus accelerated, a subjective year of thinking would be accomplished for every 31 physical seconds in the outside world, and a millennium would fly by in eight-and-a-half hours.”2 It is literally inconceivable how much smarter than a human a computer would be that could do a thousand years of thinking in the equivalent of a human’s day.
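The figures in that quotation are rounded, and the arithmetic behind them is easy to check. The short sketch below reproduces it under two assumptions that are mine rather than Yudkowsky's: a year of 365.25 days and a flat million-fold speedup. The names `SECONDS_PER_YEAR`, `SPEEDUP`, and `wall_clock_seconds` are purely illustrative.

```python
# Assumption: a Julian year of 365.25 days; the quotation does not say which year length it uses.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

# The million-fold speedup posited in the quotation.
SPEEDUP = 1_000_000


def wall_clock_seconds(subjective_years: float, speedup: float = SPEEDUP) -> float:
    """Outside-world seconds needed for a sped-up mind to do `subjective_years` of thinking."""
    return subjective_years * SECONDS_PER_YEAR / speedup


year_in_seconds = wall_clock_seconds(1)
millennium_in_hours = wall_clock_seconds(1000) / 3600

print(f"One subjective year: {year_in_seconds:.1f} seconds")
print(f"One subjective millennium: {millennium_in_hours:.2f} hours")
```

Under these assumptions a subjective year takes a little under 32 seconds and a subjective millennium a little under nine hours, so the quoted "31 physical seconds" and "eight-and-a-half hours" are in the right range, if slightly rounded down.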

In this scenario, it is not that AI is evil so much as it is amoral. It just doesn’t care about humans, or about anything else for that matter. Was IBM’s Watson thrilled to defeat Ken Jennings and Brad Rutter in Jeopardy!? Don’t be silly. Watson didn’t even know it was playing a game, much less feeling glorious in victory. Yudkowsky isn’t worried about AI winning game shows, however. “The unFriendly AI has the ability to repattern all matter in the solar system according to its optimization target. This is fate for us if the AI does not choose specifically according to the criterion of how this transformation affects existing patterns such as biology and people.”3 As Yudkowsky succinctly explains it, “The AI does not hate you, nor does it love you, but you are made out of atoms which it can use for something else.” Yudkowsky thinks that if we don’t get on top of this now it will be too late. “The AI runs on a different timescale than you do; by the time your neurons finish thinking the words ‘I should do something’ you have already lost.”4

To be fair, Yudkowsky is not the only AI Dystopian. In March of 2023 thousands of people signed an open letter calling “on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.”5 Signatories include Elon Musk, Stuart Russell, Steve Wozniak, Andrew Yang, Yuval Noah Harari, Max Tegmark, Tristan Harris, Gary Marcus, Christof Koch, George Dyson, and a who’s who of computer scientists, scholars, and researchers (now totaling over 33,000) concerned that, following the protocols of the Asilomar AI Principles, “Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources.”6

Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable.7

Forget the Hollywood version of existential-threat AI in which malevolent computers and robots (the Terminator!) take us over, making us their slaves or servants, or driving us into extinction through techno-genocide. AI Dystopians envision a future in which amoral AI continues on its path of increasing intelligence to a tipping point beyond which its intelligence will so far exceed ours that we can’t stop it from inadvertently destroying us.

Cambridge University computer scientist and researcher at the Centre for the Study of Existential Risk, Stuart Russell, for example, compares the growth of AI to the development of nuclear weapons: “From the beginning, the primary interest in nuclear technology was the inexhaustible supply of energy. The possibility of weapons was also obvious. I think there is a reasonable analogy between unlimited amounts of energy and unlimited amounts of intelligence. Both seem wonderful until one thinks of the possible risks.”8

The paradigmatic example of this AI threat is the “paperclip maximizer,” a thought experiment devised by the Oxford University philosopher Nick Bostrom, in which an AI-controlled machine designed to make paperclips (apparently without an off switch) runs out of the initial supply of raw materials and so utilizes any available atoms that happen to be in the vicinity, including people. From there, it “starts transforming first all of Earth and then increasing portions of space into paperclip manufacturing facilities.”9 Before long the entire universe is made up of nothing but paperclips and paperclip makers.

Bostrom presents this thought experiment in his 2014 book Superintelligence, in which he defines an existential risk as “one that threatens to cause the extinction of Earth-originating intelligent life or to otherwise permanently and drastically destroy its potential for future desirable development.” We blithely go on making smarter and smarter AIs because they make our lives better, and so the checks and balances that should be built into AI programs (such as how to turn them off) are not available by the time AI reaches the “smarter is more dangerous” level. Bostrom suggests what might then happen when AI takes a “treacherous turn” toward the dark side:

Our demise may instead result from the habitat destruction that ensues when the AI begins massive global construction projects using nanotech factories and assemblers—construction projects which quickly, perhaps within days or weeks, tile all of the Earth’s surface with solar panels, nuclear reactors, supercomputing facilities with protruding cooling towers, space rocket launchers, or other installations whereby the AI intends to maximize the long-term cumulative realization of its values. Human brains, if they contain information relevant to the AI’s goals, could be disassembled and scanned, and the extracted data transferred to some more efficient and secure storage format.10

Other extinction scenarios are played out by the documentary filmmaker James Barrat in his ominously titled book (and film) Our Final Invention: Artificial Intelligence and the End of the Human Era. After interviewing all the major AI Dystopians, Barrat details how today’s AI will develop into AGI (artificial general intelligence) that will match human intelligence, and then become smarter by a factor of 10, then 100, then 1000, at which point it will have evolved into an artificial superintelligence (ASI).

You and I are hundreds of times smarter than field mice, and share about 90 percent of our DNA with them. But do we consult them before plowing under their dens for agriculture? Do we ask lab monkeys for their opinions before we crush their heads to learn more about sports injuries? We don’t hate mice or monkeys, yet we treat them cruelly. Superintelligent AI won’t have to hate us to destroy us.11

Since ASI will (presumably) be self-aware, it will “want” things like energy and resources it can use to continue doing what it was programmed to do in fulfilling its goals (like making paperclips), and then, portentously, “it will not want to be turned off or destroyed” (because that would prevent it from achieving its directive). Then (and here’s the point in the dystopian film version of the book when the music and the lighting turn dark) this ASI that is a thousand times smarter than humans and can solve problems millions or billions of times faster “will seek to expand out of the secure facility that contains it to have greater access to resources with which to protect and improve itself.” Once ASI escapes from its confines there will be no stopping it. You can’t just pull the plug because, being so much smarter than you, it will have anticipated such a possibility.

After its escape, for self-protection it might hide copies of itself in cloud computing arrays, in botnets it creates, in servers and other sanctuaries into which it could invisibly and effortlessly hack. It would want to be able to manipulate matter in the physical world and so move, explore, and build, and the easiest, fastest way to do that might be to seize control of critical infrastructure—such as electricity, communications, fuel, and water—by exploiting their vulnerabilities through the Internet. Once an entity a thousand times our intelligence controls human civilization’s lifelines, blackmailing us into providing it with manufactured resources, or the means to manufacture them, or even robotic bodies, vehicles, and weapons, would be elementary. The ASI could provide the blueprints for whatever it required.12

From there it is only a matter of time before ASI tricks us into believing it will build nanoassemblers for our benefit to create the goods we need, but then, Barrat warns, “instead of transforming desert sands into mountains of food, the ASI’s factories would begin converting all material into programmable matter that it could then transform into anything—computer processors, certainly, and spaceships or megascale bridges if the planet’s new most powerful force decides to colonize the universe.” Nanoassembling anything requires atoms, and since ASI doesn’t care about humans, the atoms of which we are made will just be more raw material from which to continue the assembly process. This, says Barrat, echoing the AI pessimists he interviewed, is not just possible, “but likely if we do not begin preparing very carefully now.” Cue dark music.

AI Utopia

Then there are the AI Utopians, most notably represented by Ray Kurzweil in his technoutopian bible The Singularity is Near, in which he demonstrates what he calls “the law of accelerating returns”—not just that change is accelerating, but that the rate of change is accelerating. This is Moore’s Law—the doubling rate of computer power since the 1960s—on steroids, and applied to all science and technology. This has led the world to change more in the past century than it did in the previous 1000 centuries. As we approach the Singularity, says Kurzweil, the world will change more in a decade than in 1000 centuries, and as the acceleration continues and we reach the Singularity the world will change more in a year than in all pre-Singularity history.

Singularitarians, along with their brethren in the transhumanist, post-humanist, Fourth Industrial Revolution, post-scarcity, technolibertarian, extropian, and technogaianism movements, project a future in which benevolent computers, robots, and replicators produce limitless prosperity, end poverty and hunger, conquer disease and death, achieve immortality, colonize the galaxy, and eventually even spread throughout the universe by reaching the Omega point where we/they become omniscient, omnipotent, and omnibenevolent deities.13 As a former born-again Christian and evangelist, I find that this all sounds a bit too much like religion for my more skeptical tastes.

AI Protopia

In fact, most AI scientists are neither utopian nor dystopian, and instead spend most of their time thinking of ways to make our machines incrementally smarter and our lives gradually better, an approach that technology historian and visionary Kevin Kelly calls protopia. “I believe in progress in an incremental way where every year it’s better than the year before but not by very much—just a micro amount.”14 In researching his 2010 book What Technology Wants, for example, Kelly recalls that he went through back issues of Time and Newsweek, plus early issues of Wired (which he co-founded and edited), to see what everyone was predicting for the Web:

Generally, what people thought, including to some extent myself, was it was going to be better TV, like TV 2.0. But, of course, that missed the entire real revolution of the Web, which was that most of the content would be generated by the people using it. The Web was not better TV, it was the Web. Now we think about the future of the Web, we think it’s going to be the better Web; it’s going to be Web 2.0, but it’s not. It’s going to be as different from the Web as Web was from TV.15

Instead of aiming for that unattainable place (the literal meaning of utopia) where everyone lives in perfect harmony forever, we should aspire to a process of gradual, stepwise advancement of the kind witnessed in the history of the automobile. Instead of wondering where our flying cars are, think of automobiles as becoming incrementally better since the 1950s with the addition of rack-and-pinion steering, anti-lock brakes, bumpers and headrests, electronic ignition systems, air conditioning, seat belts, air bags, catalytic converters, electronic fuel injection, hybrid engines, electronic stability control, keyless entry systems, GPS navigation systems, digital gauges, high-quality sound systems, lane departure warning systems, adaptive cruise control, blind spot monitoring, automatic emergency braking, forward collision warning systems, rearview cameras, Bluetooth connectivity for hands-free phone calls, self-parking and driving assistance, pedestrian detection, adaptive headlights and, eventually, fully autonomous driving technology. How does this type of technological improvement translate into progress? Kelly explains:

One way to think about this is if you imagine the very first tool made, say, a stone hammer. That stone hammer could be used to kill somebody, or it could be used to make a structure, but before that stone hammer became a tool, that possibility of making that choice did not exist. Technology is continually giving us ways to do harm and to do well; it’s amplifying both…but the fact that we also have a new choice each time is a new good. That, in itself, is an unalloyed good—the fact that we have another choice and that additional choice tips that balance in one direction towards a net good. So you have the power to do evil expanded. You have the power to do good expanded. You think that’s a wash. In fact, we now have a choice that we did not have before, and that tips it very, very slightly in the category of the sum of good.16

Instead of Great Leap Forward or Catastrophic Collapse Backward, think Small Step Upward.17

Why AI is Very Likely Not an Existential Threat

To be sure, artificial intelligence is not risk-free, but measured caution is called for, not apocalyptic rhetoric. To that end I recommend a document published by the Center for AI Safety drafted by Dan Hendrycks, Mantas Mazeika, and Thomas Woodside, in which they identify four primary risks they deem worthy of further discussion:

Malicious use. Actors could intentionally harness powerful AIs to cause widespread harm. Specific risks include bioterrorism enabled by AIs that can help humans create deadly pathogens; the use of AI capabilities for propaganda, censorship, and surveillance.

AI race. Competition could pressure nations and corporations to rush the development of AIs and cede control to AI systems. Militaries might face pressure to develop autonomous weapons and use AIs for cyberwarfare, enabling a new kind of automated warfare where accidents can spiral out of control before humans have the chance to intervene. Corporations will face similar incentives to automate human labor and prioritize profits over safety, potentially leading to mass unemployment and dependence on AI systems.

Organizational risks. Organizational accidents have caused disasters including Chernobyl, Three Mile Island, and the Challenger Space Shuttle disaster. Similarly, the organizations developing and deploying advanced AIs could suffer catastrophic accidents, particularly if they do not have a strong safety culture. AIs could be accidentally leaked to the public or stolen by malicious actors.

Rogue AIs. We might lose control over AIs as they become more intelligent than we are. AIs could experience goal drift as they adapt to a changing environment, similar to how people acquire and lose goals throughout their lives. In some cases, it might be instrumentally rational for AIs to become power-seeking. We also look at how and why AIs might engage in deception, appearing to be under control when they are not.18

Nevertheless, as for the AI dystopian arguments discussed above, there are at least seven good reasons to be skeptical that AI poses an existential threat.

First, most AI dystopian projections are grounded in a false analogy between natural intelligence and artificial intelligence. We are thinking machines, but natural selection also designed into us emotions to shortcut the thinking process because natural intelligences are limited in speed and capacity by the number of neurons that can be crammed into a skull that has to pass through a pelvic opening at birth. Emotions are proxies for getting us to act in ways that lead to an increase in reproductive success, particularly in response to threats faced by our Paleolithic ancestors. Anger leads us to strike out and defend ourselves against danger. Fear causes us to pull back and escape from risks. Disgust directs us to push out and expel that which is bad for us. Computing the odds of danger in any given situation takes too long. We need to react instantly. Emotions shortcut the information processing power needed by brains that would otherwise become bogged down with all the computations necessary for survival. Their purpose, in an ultimate causal sense, is to drive behaviors toward goals selected by evolution to enhance survival and reproduction. AIs—even AGIs—will have no need of such emotions and so there would be no reason to program them in unless, say, terrorists chose to do so for their own evil purposes. But that’s a human nature problem, not a computer nature issue.

Second, most AI doomsday scenarios invoke goals or drives in computers similar to those in humans, but as Steven Pinker has pointed out, “AI dystopias project a parochial alpha-male psychology onto the concept of intelligence. They assume that superhumanly intelligent robots would develop goals like deposing their masters or taking over the world.” It is equally possible, Pinker suggests, that “artificial intelligence will naturally develop along female lines: fully capable of solving problems, but with no desire to annihilate innocents or dominate the civilization.”19 Without such evolved drives it will likely never occur to AIs to take such actions against us.

Third, the problem of AI’s values being out of alignment with our own, thereby inadvertently turning us into paperclips, for example, implies yet another human characteristic, namely the feeling of valuing or wanting something. As the science writer Michael Chorost adroitly notes, “until an AI has feelings, it’s going to be unable to want to do anything at all, let alone act counter to humanity’s interests.” Thus, “the minute an AI wants anything, it will live in a universe with rewards and punishments—including punishments from us for behaving badly. In order to survive in a world dominated by humans, a nascent AI will have to develop a human-like moral sense that certain things are right and others are wrong. By the time it’s in a position to imagine tiling the Earth with solar panels, it’ll know that it would be morally wrong to do so.”20

Fourth, if AI did develop moral emotions along with superintelligence, why would they not also include reciprocity, cooperativeness, and even altruism? Natural intelligences such as ours also include the capacity to reason, and once you step onto what Peter Singer calls the “escalator of reason” it can carry you upward to genuine morality and concerns about harming others. “Reasoning is inherently expansionist. It seeks universal application.”21 Chorost draws the implication: “AIs will have to step on the escalator of reason just like humans have, because they will need to bargain for goods in a human-dominated economy and they will face human resistance to bad behavior.”22

Fifth, for an AI to get around this problem it would need to evolve emotions on its own, but the only way for this to happen in a world dominated by the natural intelligence called humans would be for us to allow it to happen, which we wouldn’t because there’s time enough to see it coming. Bostrom’s “treacherous turn” will come with road signs warning us that there’s a sharp bend in the highway with enough time for us to grab the wheel. Incremental progress is what we see in most technologies, including and especially AI, which will continue to serve us in the manner we desire and need. It is a fact of history that science and technologies never lead to utopian or dystopian societies.

Sixth, as Steven Pinker outlined in his 2018 book Enlightenment Now in addressing a myriad of purported existential threats that could put an end to centuries of human progress, all such arguments are self-refuting:

They depend on the premises that (1) humans are so gifted that they can design an omniscient and omnipotent AI, yet so moronic that they would give it control of the universe without testing how it works, and (2) the AI would be so brilliant that it could figure out how to transmute elements and rewire brains, yet so imbecilic that it would wreak havoc based on elementary blunders of misunderstanding.23

Seventh, both utopian and dystopian visions of AI are based on a projection of the future quite unlike anything history has produced. Even Ray Kurzweil’s “law of accelerating returns,” as remarkable as it has been, has nevertheless advanced at a pace that has allowed for considerable ethical deliberation with appropriate checks and balances applied to various technologies along the way. With time, even if an unforeseen motive somehow began to emerge in an AI, we would have the time to reprogram it before it got out of control.

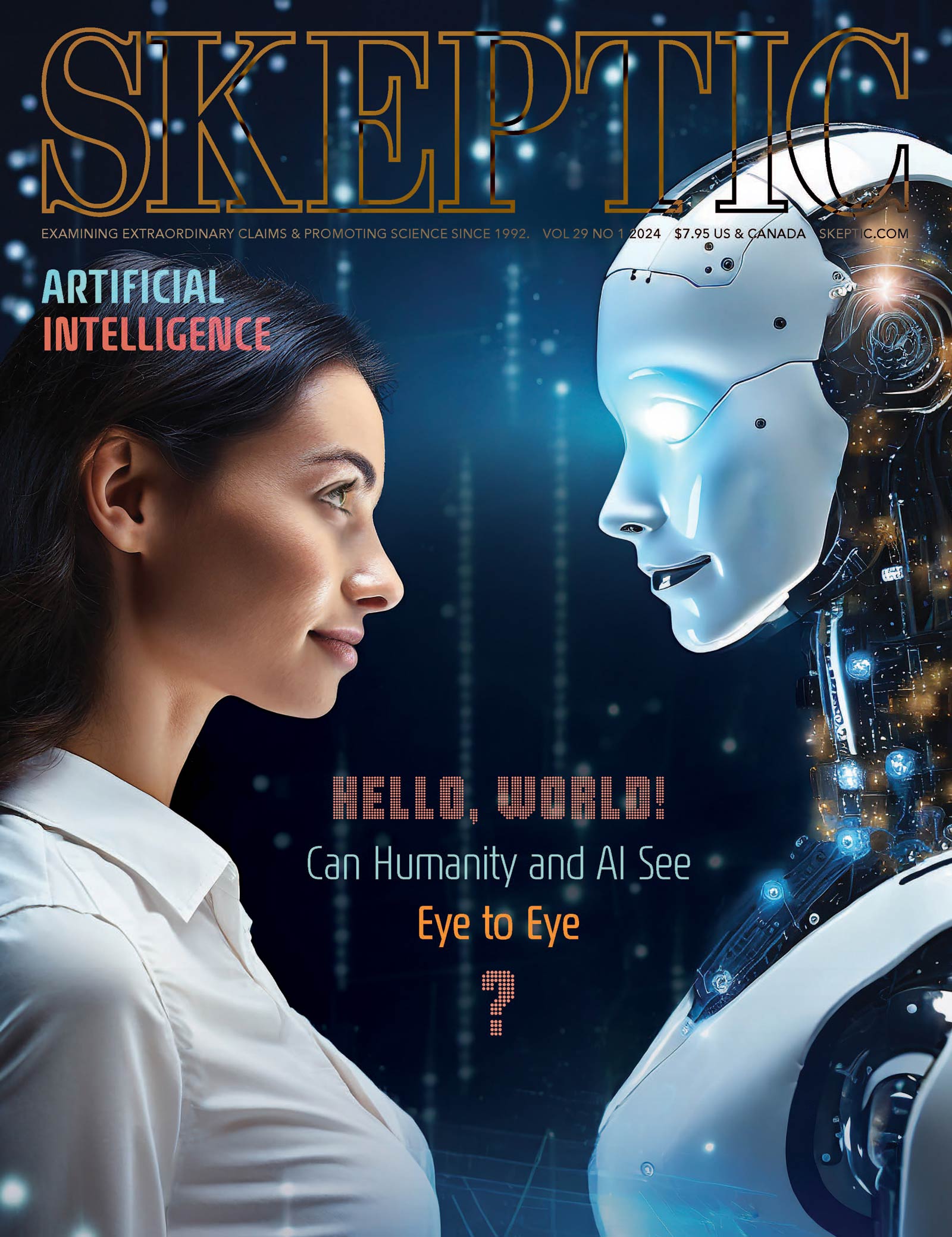

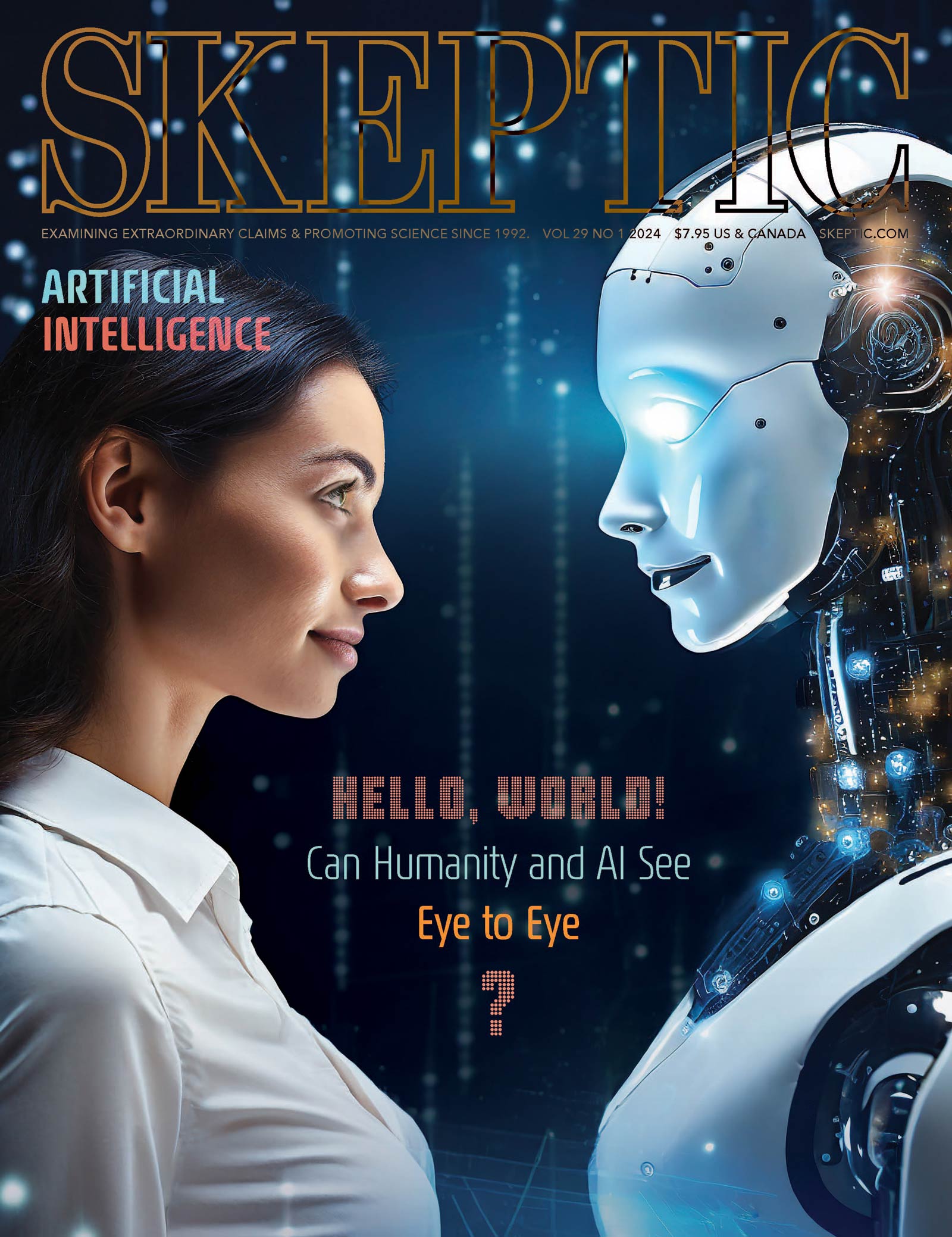

This article appeared in Skeptic magazine 29.1

That is also the judgment of Alan Winfield, an engineering professor and co-author of the Principles of Robotics, a list of rules for regulating robots in the real world that goes far beyond Isaac Asimov’s famous three laws of robotics (which were, in any case, designed to fail as plot devices for science fictional narratives).24 Winfield points out that all of these doomsday scenarios depend on a long sequence of big ifs to unroll sequentially:

If we succeed in building human equivalent AI and if that AI acquires a full understanding of how it works, and if it then succeeds in improving itself to produce super-intelligent AI, and if that super-AI, accidentally or maliciously, starts to consume resources, and if we fail to pull the plug, then, yes, we may well have a problem. The risk, while not impossible, is improbable.25
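Winfield's point about a long chain of ifs can be made concrete with a little probability arithmetic. The sketch below is only an illustration: the step labels paraphrase his quotation, but the probabilities are hypothetical placeholders, not estimates made by Winfield or anyone else, and each one is assumed to be conditional on all the earlier steps having already happened.

```python
from math import prod

# Hypothetical placeholder probabilities, one per "if" in the quotation.
# Each value is assumed to be conditional on every earlier step having occurred.
# They are NOT estimates from Winfield or from this article.
big_ifs = {
    "we build human-equivalent AI": 0.5,
    "that AI fully understands how it works": 0.5,
    "it improves itself into super-intelligent AI": 0.5,
    "the super-AI starts to consume resources": 0.5,
    "we fail to pull the plug": 0.5,
}


def chain_probability(conditional_probabilities) -> float:
    """Probability that every step in the chain occurs (product of the conditionals)."""
    return prod(conditional_probabilities)


joint = chain_probability(big_ifs.values())
print(f"Probability that all {len(big_ifs)} steps occur: {joint:.5f}")
```

Even when every step is given coin-flip odds, the chance that all five occur together is only about 3 percent, and the joint probability can never exceed that of the least likely step. Different placeholder values would of course give a different answer, which is why the disagreement is really about how big each "if" is.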

The Beginning of Infinity

At this point in the debate the Precautionary Principle is usually invoked: if something has the potential for great harm to a large number of people, then even in the absence of evidence the burden of proof is on skeptics to demonstrate that the potential threat is not harmful; better safe than sorry.26 But the precautionary principle is a weak argument for three reasons: (1) it is difficult to prove a negative, that is, to prove that there is no future harm; (2) it raises unnecessary public alarm and personal anxiety; (3) pausing or stopping AI research at this stage is not without its downsides, including and especially the development of life-saving drugs, medical treatments, and other life-enhancing science and technologies that would benefit immeasurably from AI. As the physicist David Deutsch convincingly argues, through protopian progress there is every reason to think that we are only now at the beginning of infinity, and that “everything that is not forbidden by laws of nature is achievable, given the right knowledge.”

Like an explosive awaiting a spark, unimaginably numerous environments in the universe are waiting out there, for aeons on end, doing nothing at all or blindly generating evidence and storing it up or pouring it out into space. Almost any of them would, if the right knowledge ever reached it, instantly and irrevocably burst into a radically different type of physical activity: intense knowledge-creation, displaying all the various kinds of complexity, universality and reach that are inherent in the laws of nature, and transforming that environment from what is typical today into what could become typical in the future. If we want to, we could be that spark.27

Let’s be that spark. Unleash the power of artificial intelligence.

References

1. https://bit.ly/47dbc1P

2. http://bit.ly/1ZSdriu

3. Ibid.

4. Ibid.

5. https://bit.ly/4aw1gU9

6. https://bit.ly/3HmrKdt

7. Ibid.

8. Quoted in: https://bit.ly/426EM88

9. Bostrom, N. (2014). Superintelligence: Paths, Dangers, Strategies. Oxford University Press.

10. Ibid.

11. Barrat, J. (2013). Our Final Invention: Artificial Intelligence and the End of the Human Era. St. Martin’s Press.

12. Ibid.

13. I cover these movements in my 2018 book Heavens on Earth: The Scientific Search for the Afterlife, Immortality, and Utopia. See also: Ptolemy, B. (2009). Transcendent Man: A Film About the Life and Ideas of Ray Kurzweil. Ptolemaic Productions and Therapy Studios. Inspired by the book The Singularity is Near by Ray Kurzweil and http://bit.ly/1EV4jk0

14. https://bit.ly/3SbJI7w

15. Ibid.

16. Ibid.

17. http://bit.ly/25Fw8e6 Readers interested in how 191 other scholars and scientists answered this question can find them here: http://bit.ly/1SLUxYs

18. https://bit.ly/3SpfgYw

19. http://bit.ly/1S0AlP7

20. http://slate.me/1SgHsUJ

21. Singer, P. (1981). The Expanding Circle: Ethics, Evolution, and Moral Progress. Princeton University Press.

22. http://slate.me/1SgHsUJ

23. Pinker, S. (2018). Enlightenment Now: The Case for Reason, Science, Humanism, and Progress. Viking.

24. http://bit.ly/1UPHZlx

25. http://bit.ly/1VRbQLM

26. Cameron, J. & Abouchar, J. (1996). The status of the precautionary principle in international law. In: The Precautionary Principle and International Law: The Challenge of Implementation, Eds. Freestone, D. & Hey, E. International Environmental Law and Policy Series, 31. Kluwer Law International, 29–52.

27. Deutsch, D. (2011). The Beginning of Infinity: Explanations that Transform the World. Viking.

This article was published on July 26, 2024.